Installing Jekyll

I dislike Ruby, but that might be because I always fly too close to the sun every time I encounter it. Of course there were going to be dragons somewhere along the way.

To get Jekyll up and running, prep the machine:

sudo apt update

sudo apt upgrade

But of course that doesn’t work, because the virtual machine you’re running this on somehow forgot how to do DNS. You try to change it from the US mirrors to another region, but break something along the way. Before starting to hate Ubuntu you double-check on the host OS… oh well. The ISP, for some reason, won’t resolve the mirrors’ hostnames. You revert the changes as best you can, set the main DNS server to a public one and… wouldn’t you know it… it works.

Time to install some dependencies:

sudo apt install ruby

sudo apt install gcc g++ make

sudo apt install ruby-dev

Then you actually read the install instructions and realise that ruby-full and build-essential exist:

sudo apt-get install ruby-full build-essential zlib1g-dev

Finally we venture into bat country and run the package thingamajig for Ru…

No wait! We need to set where gem packages are stored. Otherwise things break and everyone is unhappy:

echo '# Install Ruby Gems to ~/gems' >> ~/.bashrc

echo 'export GEM_HOME="$HOME/gems"' >> ~/.bashrc

echo 'export PATH="$HOME/gems/bin:$PATH"' >> ~/.bashrc

source ~/.bashrc
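One thing to watch with the echo lines above: every run appends them again, so running the block twice leaves duplicates in ~/.bashrc. A minimal sketch of a version that only appends what is missing follows. The function names append_once and configure_gem_home are made up for this example, and the file to edit is passed in as an argument.

```shell
# Append a line to a file only if that exact line is not already there.
append_once() {
  line="$1"
  file="$2"
  touch "$file"
  # -x: match the whole line, -F: fixed string (no regex), -q: quiet
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Hypothetical wrapper: the same three lines as above, written to the file in $1.
configure_gem_home() {
  rc="$1"
  append_once '# Install Ruby Gems to ~/gems' "$rc"
  append_once 'export GEM_HOME="$HOME/gems"' "$rc"
  append_once 'export PATH="$HOME/gems/bin:$PATH"' "$rc"
}
```

Calling configure_gem_home ~/.bashrc followed by source ~/.bashrc should leave the shell in the same state as the original commands, however many times it is run.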

Now we can install it!

gem install jekyll bundler

Woohoo! That kind of worked, and we didn’t run into any issues along the way. Let’s use the command-line utility to set up the skeleton, because Jekyll has to have everything:

jekyll new my-awesome-site

Running bundle install in /home/user/my-awesome-site...

Bundler: Fetching gem metadata from https://rubygems.org/............

Bundler: Resolving dependencies...

Bundler: Using bundler 2.3.19

Bundler: Using colorator 1.1.0

Bundler: Using concurrent-ruby 1.1.10

Bundler: Using eventmachine 1.2.7

Bundler: Using http_parser.rb 0.8.0

Bundler: Using ffi 1.15.5

Bundler: Using forwardable-extended 2.6.0

Bundler: Using rb-fsevent 0.11.1

Bundler: Using rexml 3.2.5

Bundler: Using liquid 4.0.3

Bundler: Using mercenary 0.4.0

Bundler: Using rouge 3.30.0

Bundler: Using safe_yaml 1.0.5

Bundler: Using unicode-display_width 1.8.0

Bundler: Using i18n 1.12.0

Bundler: Using sassc 2.4.0

Bundler: Fetching public_suffix 5.0.0

Bundler: Using rb-inotify 0.10.1

Bundler: Using kramdown 2.4.0

Bundler: Using pathutil 0.16.2

Bundler: Using terminal-table 2.0.0

Bundler: Using jekyll-sass-converter 2.2.0

Bundler: Using em-websocket 0.5.3

Bundler: Using listen 3.7.1

Bundler: Using kramdown-parser-gfm 1.1.0

Bundler: Using jekyll-watch 2.2.1

Bundler: Installing public_suffix 5.0.0

Bundler: Fetching addressable 2.8.1

Bundler: Installing addressable 2.8.1

Bundler: Using jekyll 4.2.2

Bundler: Using jekyll-feed 0.16.0

Bundler: Using jekyll-seo-tag 2.8.0

Bundler: Using minima 2.5.1

Bundler: Bundle complete! 7 Gemfile dependencies, 31 gems now installed.

Bundler: Use `bundle info [gemname]` to see where a bundled gem is installed.

New jekyll site installed in /home/user/my-awesome-site.

And then we can run it:

bundle exec jekyll serve

configuration file: /home/user/my-awesome-site/_config.yml

Source: /home/user/my-awesome-site

Destination: /home/user/my-awesome-site/_site

Incremental build: disabled. Enable with --incremental

Generating...

Jekyll Feed: Generating feed for posts

done in 0.722 seconds.

Auto-regeneration: enabled for '/home/user/my-awesome-site'

------------------------------------------------

Jekyll 4.2.2 Please append `--trace` to the `serve` command

for any additional information or backtrace.

------------------------------------------------

/var/lib/gems/3.0.0/gems/jekyll-4.2.2/lib/jekyll/commands/serve/servlet.rb:3:in `require': cannot load such file -- webrick (LoadError)

from /var/lib/gems/3.0.0/gems/jekyll-4.2.2/lib/jekyll/commands/serve/servlet.rb:3:in `<top (required)>'

from /var/lib/gems/3.0.0/gems/jekyll-4.2.2/lib/jekyll/commands/serve.rb:179:in `require_relative'

from /var/lib/gems/3.0.0/gems/jekyll-4.2.2/lib/jekyll/commands/serve.rb:179:in `setup'

from /var/lib/gems/3.0.0/gems/jekyll-4.2.2/lib/jekyll/commands/serve.rb:100:in `process'

from /var/lib/gems/3.0.0/gems/jekyll-4.2.2/lib/jekyll/command.rb:91:in `block in process_with_graceful_fail'

from /var/lib/gems/3.0.0/gems/jekyll-4.2.2/lib/jekyll/command.rb:91:in `each'

from /var/lib/gems/3.0.0/gems/jekyll-4.2.2/lib/jekyll/command.rb:91:in `process_with_graceful_fail'

from /var/lib/gems/3.0.0/gems/jekyll-4.2.2/lib/jekyll/commands/serve.rb:86:in `block (2 levels) in init_with_program'

from /var/lib/gems/3.0.0/gems/mercenary-0.4.0/lib/mercenary/command.rb:221:in `block in execute'

from /var/lib/gems/3.0.0/gems/mercenary-0.4.0/lib/mercenary/command.rb:221:in `each'

from /var/lib/gems/3.0.0/gems/mercenary-0.4.0/lib/mercenary/command.rb:221:in `execute'

from /var/lib/gems/3.0.0/gems/mercenary-0.4.0/lib/mercenary/program.rb:44:in `go'

from /var/lib/gems/3.0.0/gems/mercenary-0.4.0/lib/mercenary.rb:21:in `program'

from /var/lib/gems/3.0.0/gems/jekyll-4.2.2/exe/jekyll:15:in `<top (required)>'

from /home/user/gems/bin/jekyll:25:in `load'

from /home/user/gems/bin/jekyll:25:in `<top (required)>'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/lib/bundler/cli/exec.rb:58:in `load'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/lib/bundler/cli/exec.rb:58:in `kernel_load'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/lib/bundler/cli/exec.rb:23:in `run'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/lib/bundler/cli.rb:483:in `exec'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/lib/bundler/vendor/thor/lib/thor/command.rb:27:in `run'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/lib/bundler/vendor/thor/lib/thor/invocation.rb:127:in `invoke_command'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/lib/bundler/vendor/thor/lib/thor.rb:392:in `dispatch'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/lib/bundler/cli.rb:31:in `dispatch'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/lib/bundler/vendor/thor/lib/thor/base.rb:485:in `start'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/lib/bundler/cli.rb:25:in `start'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/exe/bundle:48:in `block in <top (required)>'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/lib/bundler/friendly_errors.rb:120:in `with_friendly_errors'

from /var/lib/gems/3.0.0/gems/bundler-2.3.19/exe/bundle:36:in `<top (required)>'

from /home/user/gems/bin/bundle:25:in `load'

from /home/user/gems/bin/bundle:25:in `<main>'

Nope. That didn’t work. Weird. This is why I strongly dislike Ruby. No batteries included. Fortunately some internet citizen at https://github.com/github/pages-gem/issues/752 mentions that Ruby 3.0 and later no longer ship with something called webrick. The documentation could have mentioned that…

bundle add webrick

There we go! All up and running!

Poking Around

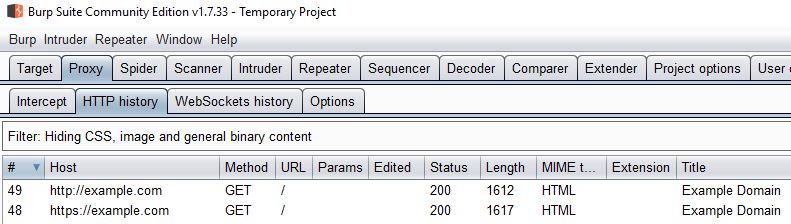

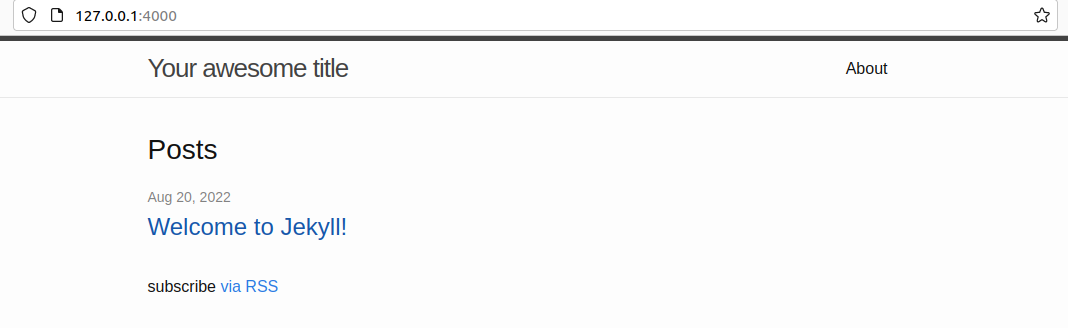

So now that we can actually build and serve it, we can have a look around:

user@jekyll:~/my-awesome-site$ tree

.
├── 404.html
├── about.markdown
├── _config.yml
├── Gemfile
├── Gemfile.lock
├── index.markdown
├── _posts
│   └── 2022-08-20-welcome-to-jekyll.markdown
└── _site
    ├── 404.html
    ├── about
    │   └── index.html
    ├── assets
    │   ├── main.css
    │   ├── main.css.map
    │   └── minima-social-icons.svg
    ├── feed.xml
    ├── index.html
    └── jekyll
        └── update
            └── 2022
                └── 08
                    └── 20
                        └── welcome-to-jekyll.html

9 directories, 15 files

All in all, the documentation is great. In short:

- Pages can be located kind of wherever, but it’s easier to keep them in the root folder for the time being.

- Posts are kept in _posts. A post can be a blog post, like the one you’re reading at the moment.

- Pages and posts can be in different formats, but you’d be weird if you used something other than HTML and MD (Markdown).

- _site contains the built website.

Ah! But notice how the built page contains more files than the root! That is because f- you. If you want to be able to see and edit all of the files, then you need to convert the default theme from being a gem-based theme to being a regular theme. This is why we can’t have nice things.

It seems easy enough reading through https://jekyllrb.com/docs/themes/#converting-gem-based-themes-to-regular-themes, but HOW DO I FIND THE GEM, AND HOW DO I GET THE FILES OUT OF THE GEM?!

Just dive into $GEM_HOME and find it there, somewhere. A gem package is just a folder. Pull the files out of there, place them in the root folder, and then carry on with the guide.
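For the finding part, Bundler can print the folder of a gem in the bundle (the install log earlier hints at bundle info). The copying part can be sketched as below. The function name copy_theme_files is made up, and the list of folders is an assumption based on what the default minima theme usually contains.

```shell
# Copy the usual theme folders from a gem folder ($1) into the site root ($2).
# Folders that do not exist in the gem are skipped.
copy_theme_files() {
  theme_dir="$1"
  site_dir="$2"
  for d in _layouts _includes _sass assets; do
    if [ -d "$theme_dir/$d" ]; then
      cp -R "$theme_dir/$d" "$site_dir/"
    fi
  done
}

# Usage, from the site root (needs bundler and the minima gem installed):
#   copy_theme_files "$(bundle info --path minima)" .
```

Nothing is removed from the gem folder, so the copy can be repeated if the first attempt goes sideways.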

With this we can start poking around, making changes, and seeing what happens. There are still a few hidden things that require Googling, e.g. certain configuration parameters that automagically make things happen. That’s especially true for the plugins the default theme uses.

Jekyll Build Process

So whenever we serve the page, or simply ask Jekyll to build the site, a few things will happen. The documentation makes sense when you understand it. Until then it’s confusing.

Think of it this way: Jekyll goes through all pages and posts and tries to render them. Posts are essentially pages. Each page has a layout specified. Jekyll finds the layout in _layouts, and continues.

A layout can request to be placed within another layout, or not. It can include sections from _includes (that folder is meant to have snippets of content that is re-used). It can get pretty wild. Though that’s all in a day’s work for a templating system.

Jekyll uses Liquid.

Liquid makes sense, and it’s possible to get things done by looking at the default Jekyll theme in conjunction with some searching. Remember, one pair of squiggly brackets {% denotes code %}, and two {{denotes content}}.
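To make the layout chain and the two kinds of brackets a bit more concrete, here is a rough sketch that writes a tiny layout, an include and a page into a folder. All file names and contents are made up for illustration, and so is the function name make_demo_site. Only the folder names _layouts and _includes come from Jekyll.

```shell
# Write a tiny layout, include and page into the folder given as $1.
make_demo_site() {
  site="$1"
  mkdir -p "$site/_layouts" "$site/_includes"

  # A reusable snippet that layouts can pull in.
  cat > "$site/_includes/footer.html" <<'EOF'
<footer>Built with Jekyll</footer>
EOF

  # The layout: double brackets output content, bracket-percent runs a tag.
  cat > "$site/_layouts/default.html" <<'EOF'
<html>
  <body>
    <h1>{{ page.title }}</h1>
    {{ content }}
    {% include footer.html %}
  </body>
</html>
EOF

  # A page: the front matter between the --- lines picks the layout.
  cat > "$site/hello.md" <<'EOF'
---
layout: default
title: Hello
---
This paragraph is rendered and dropped into the layout's content placeholder.
EOF
}
```

Running make_demo_site on an empty folder and then building that folder with Jekyll should produce a hello.html wrapped in the layout, with the footer at the bottom.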

Fun fact, if you want to write {% stuff %} you need to put {% raw %} in front of it so Liquid doesn’t process it and fail horribly. Then you need to terminate it with endraw, which I don’t dare put in curly brackets in this text because then there’s no telling what will happen.

Scrapping the VM and Moving to Containers

I’ve poked around enough, and I’ve got a pretty good understanding of how things work. For whatever reason, the Ubuntu VM I’m using seems to slow down after a while, and sometimes the UI crashes and resets. Not very fun.

I could either edit files via SSH to avoid using the Linux desktop, or I could share a folder on my host with the VM. But both seem a bit like trying to fit a round peg in a square hole.

So let’s move to containers and Docker. Because containers sound cool and VMs are old. And remember, the newest coolest version of Ruby doesn’t have that webrick thing, and we’re not going to build our own god damn container with it. So we’re going to pull an older version and pin that one.

latest is for brave people.

Downloading:

docker pull jekyll/jekyll:4.2.0

Spinning up the image as a container.

docker run --rm -v ${PWD}:/srv/jekyll --publish 4000:4000 jekyll/jekyll:4.2.0 jekyll serve --force_polling

Note that the above is PowerShell syntax. --rm removes the container when it exits. -v mounts the current folder inside the container. --publish forwards a port on the host to a port inside the container. jekyll/jekyll:4.2.0 is the jekyll image provided by jekyll, pinned to version 4.2.0. jekyll serve --force_polling is the command to run inside the container when it starts, where the last part makes Jekyll poll the files for changes. The way it normally detects updates doesn’t work when the host OS is Windows.
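For anyone on bash rather than PowerShell, roughly the same command can be wrapped in a small function. This is only a sketch: the function name serve_site and the DOCKER override are made up here, and I have only run the PowerShell variant myself.

```shell
# DOCKER can be overridden (e.g. DOCKER=echo) to print the command instead of running it.
DOCKER="${DOCKER:-docker}"

# Serve the site in $1 (defaults to the current folder) on port 4000.
serve_site() {
  site_dir="${1:-$PWD}"
  "$DOCKER" run --rm \
    -v "$site_dir":/srv/jekyll \
    --publish 4000:4000 \
    jekyll/jekyll:4.2.0 \
    jekyll serve --force_polling
}
```

Setting DOCKER=echo before calling serve_site prints the arguments, which is a cheap way to check the mount path before anything is pulled.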

It takes a while, but it works like a charm:

Warning: the running version of Bundler (2.2.24) is older than the version that created the lockfile (2.3.19). We suggest you to upgrade to the version that created the lockfile by running `gem install bundler:2.3.19`.

Fetching gem metadata from https://rubygems.org/

Fetching gem metadata from https://rubygems.org/...........

Fetching gem metadata from https://rubygems.org/...........

Using bundler 2.2.24

Fetching public_suffix 4.0.7

Using colorator 1.1.0

Fetching concurrent-ruby 1.1.10

Installing public_suffix 4.0.7

Using eventmachine 1.2.7

Fetching http_parser.rb 0.8.0

Installing concurrent-ruby 1.1.10

Fetching ffi 1.15.5

Installing http_parser.rb 0.8.0 with native extensions

Installing ffi 1.15.5 with native extensions

Using forwardable-extended 2.6.0

Fetching rb-fsevent 0.11.1

Installing rb-fsevent 0.11.1

Fetching rexml 3.2.5

Installing rexml 3.2.5

Using liquid 4.0.3

Using mercenary 0.4.0

Fetching rouge 3.30.0

Installing rouge 3.30.0

Using safe_yaml 1.0.5

Fetching unicode-display_width 1.8.0

Installing unicode-display_width 1.8.0

Fetching webrick 1.7.0

Installing webrick 1.7.0

Using addressable 2.8.0

Fetching i18n 1.12.0

Installing i18n 1.12.0

Fetching em-websocket 0.5.3

Installing em-websocket 0.5.3

Using pathutil 0.16.2

Fetching kramdown 2.4.0

Installing kramdown 2.4.0

Using terminal-table 2.0.0

Using kramdown-parser-gfm 1.1.0

Using sassc 2.4.0

Using rb-inotify 0.10.1

Fetching jekyll-sass-converter 2.2.0

Fetching listen 3.7.1

Installing listen 3.7.1

Using jekyll-watch 2.2.1

Installing jekyll-sass-converter 2.2.0

Fetching jekyll 4.2.2

Installing jekyll 4.2.2

Fetching jekyll-feed 0.16.0

Fetching jekyll-seo-tag 2.8.0

Installing jekyll-feed 0.16.0

Installing jekyll-seo-tag 2.8.0

Using jekyll-sitemap 1.4.0

Bundle complete! 9 Gemfile dependencies, 32 gems now installed.

Use `bundle info [gemname]` to see where a bundled gem is installed.

ruby 2.7.1p83 (2020-03-31 revision a0c7c23c9c) [x86_64-linux-musl]

Configuration file: /srv/jekyll/_config.yml

Source: /srv/jekyll

Destination: /srv/jekyll/_site

Incremental build: disabled. Enable with --incremental

Generating...

Jekyll Feed: Generating feed for posts

done in 9.962 seconds.

Auto-regeneration may not work on some Windows versions.

Please see: https://github.com/Microsoft/BashOnWindows/issues/216

If it does not work, please upgrade Bash on Windows or run Jekyll with --no-watch.

Auto-regeneration: enabled for '/srv/jekyll'

Server address: http://0.0.0.0:4000/blag/

Server running... press ctrl-c to stop.

Would you look at that?

Folders are not Cloud Enough

Who said I was afraid of commitment?

git init

git add -A

git commit -m 'Initial'

Git isn’t cloud, so let’s move it to GitHub. But GitHub is just storage, so let’s use actions to leverage cloud processing power!

Now actions is GitHub’s way of doing things for you when things happen. It seems to follow the standard trigger-action paradigm. However, the way you go about defining the action part was new to me.

GitHub has a guide, which essentially creates a folder .github/workflows with a YML-file containing the instructions and the trigger.

In essence, this is what I got:

name: Jekyll stuff

on:
  push:
    branches: [ "main", "preview" ]

jobs:
  build:
    name: Build site
    runs-on: ubuntu-latest
    permissions:
      contents: read
      deployments: write
    steps:
      - uses: actions/checkout@v3
      - name: Build the _site in the jekyll/builder container
        run: |
          docker run \
            -v ${{ github.workspace }}:/srv/jekyll -v ${{ github.workspace }}/_site:/srv/jekyll/_site \
            jekyll/builder:latest /bin/bash -c "chmod -R 777 /srv/jekyll && jekyll build --future"
      - name: Making the deploy folder
        run: mkdir ${{ github.workspace }}/deploy
      - name: Putting the _site blag in the deploy folder
        run: mv ${{ github.workspace }}/_site ${{ github.workspace }}/deploy/blag
      - uses: actions/upload-artifact@v3
        name: Archive production artifacts
        with:
          name: site
          path: ${{ github.workspace }}/deploy

On push to either the main or preview branch, GitHub will trigger the action and run it. Each run will take place in an Ubuntu environment and:

- Pull the repository.

- Run a container with the jekyll/builder:latest image and ask it to build the site.

- Create a new folder with the results; and

- Upload the results as an artefact to GitHub.

You can download the artefact and look at it locally, or host it somewhere. Remember that this build is very brave because it was built with latest. I guess webrick isn’t needed to build, only to serve.
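The two folder-shuffling steps in the workflow are plain shell, so they can be rehearsed locally before burning a commit on them. A minimal sketch, assuming a workspace folder that already contains a built _site. The function name stage_deploy is made up.

```shell
# Mirror the "deploy folder" steps of the workflow for the workspace in $1.
stage_deploy() {
  ws="$1"
  # Same as the workflow: plain mkdir, so this fails if deploy already exists.
  mkdir "$ws/deploy" &&
    mv "$ws/_site" "$ws/deploy/blag"
}
```

After stage_deploy . the built site should sit in deploy/blag, which is the folder layout the upload step expects.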

Cloud 2: Electric Boogaloo

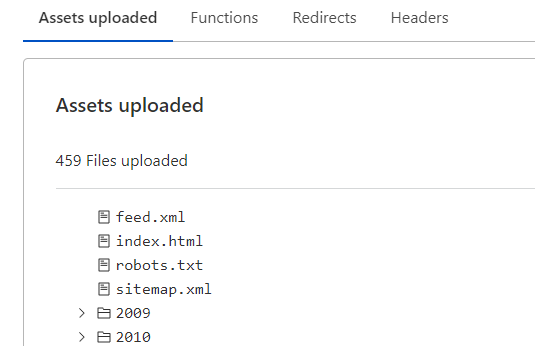

Both GitHub and Cloudflare offer static hosting called Pages. Either one could have worked, but Cloudflare can do more in other areas.

At this point I’ve waded through enough brown goo and I’m not in the mood to fight anymore. Do you know what happens if you make a typo in the action YML-file? You have to correct it of course, which adds another commit to the branch. I’m used to failing fast and improving. Failing at making the action work as intended (or doing something other than catching fire) is slow.

Edit. Save. Commit. Wait. Run. Fail. Repeat.

So I was surprised and happy to see that pushing stuff to Cloudflare was easy. They have an action you can import, and if you tell it your secrets it will work!

- name: Publish
  uses: cloudflare/pages-action@1
  with:
    apiToken: ${{ secrets.the_token }}
    accountId: ${{ secrets.the_id }}
    projectName: site
    directory: ${{ github.workspace }}/deploy
    gitHubToken: ${{ secrets.GITHUB_TOKEN }}

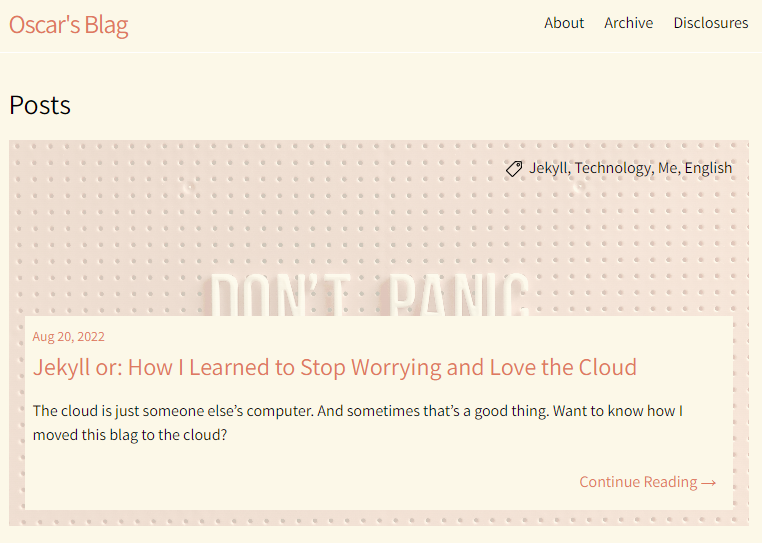

Would you look at that? #2

Finally we have to add my website as well. So far we’ve only been building and deploying the blog section. I’m not going to learn how to integrate my website with Jekyll, leave me alone.

Fortunately that project is already in git. How to get that repo in here, in this build machine, is a good question. It’s super-duper easy if the repo is public, but it’s not. It took me longer than I’d like to admit to figure out how to do it.

What you should do is create a deployment key for the remote repository, and then use the checkout action. With the correct parameters it will get the remote repo instead of the one which triggered the build:

- uses: actions/checkout@v2
  with:
    ssh-key: ${{ secrets.somekey }}
    repository: someuser/somerepo
    path: ${{ github.workspace }}/web

The contents of the web folder can then be moved into deploy, which is already set up to publish. Updating the action YML-file causes a commit, which in turn triggers the script. Wait a few minutes, and voilà!
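The move itself could look something like the sketch below. This is not the exact step from my workflow: the function name merge_web_into_deploy is made up, and it relies on the shell glob skipping dotfiles so that the .git folder left behind by the checkout stays out of the published site.

```shell
# Move everything in $1/web (except dotfiles such as .git) into $1/deploy.
merge_web_into_deploy() {
  ws="$1"
  mkdir -p "$ws/deploy"
  for item in "$ws"/web/*; do
    # If web is empty the glob stays literal, so skip anything that does not exist.
    [ -e "$item" ] || continue
    mv "$item" "$ws/deploy/"
  done
}
```

In a workflow step this would be called with the workspace path, leaving the blag folder from the earlier step untouched next to the website files.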

Final Thoughts

The whole point of the cloud machine… is lost if you keep it a secret!

Migrating the content took a lot longer than I anticipated, as did the design (what colour is the background?!). Learning to use Jekyll and wrestling with Ruby was not that difficult.

The thing that made the project fun and novel was using new technology: exploring the actions CI/CD in GitHub, their build system, static hosting at Cloudflare, and how to tie it all together automatically.

TL;DR I got mad at my hosting provider so I decided to over-engineer a new blog.
